Issue Summary

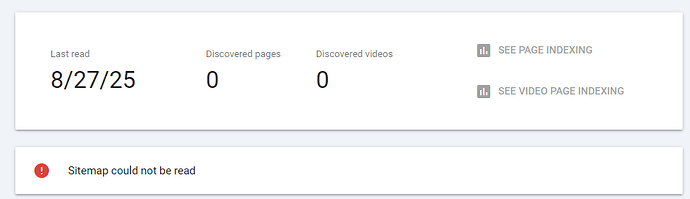

- Search engines have trouble fetching my sitemap at https://callof.monster/sitemap.xml, and likewise my robots.txt at https://callof.monster/robots.txt. This is hurting my SEO badly: I have to request indexing manually for each page and repeat it after every update, which is clearly not the intended behavior.

I do get results on Google (checked with the **site:**callof.monster filter), but only the pages I submitted manually for indexing in Google Search Console are displayed, and they rank very poorly.

Other tools, such as the free sitemap finder, have no trouble finding the sitemap themselves:

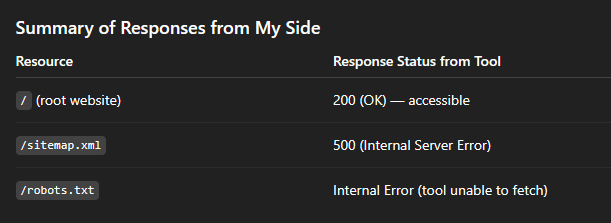

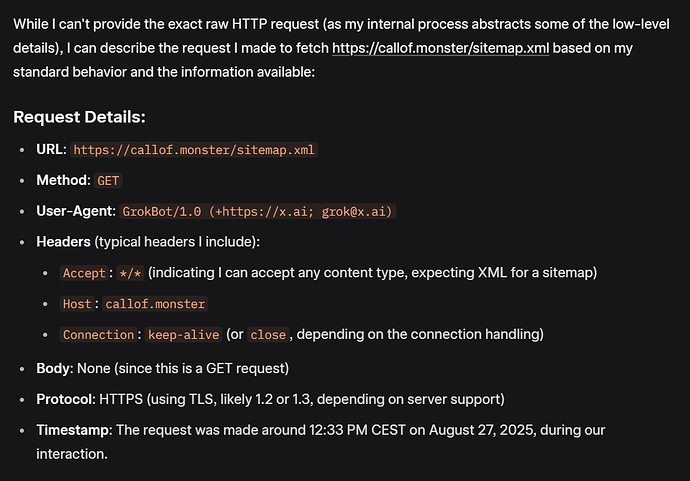

When I ask AI assistants such as GPT or Grok to fetch the sitemap.xml file, Grok receives a 403 error and GPT a 500.
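For anyone who wants to compare on their own site, here is a minimal Python sketch that requests the same URL with several User-Agent headers and reports the HTTP status for each. The function names and the User-Agent strings are illustrative assumptions, not anything taken from Ghost or Fastly. A WAF may also treat a spoofed Googlebot header from a non-Google IP differently from the real crawler, so the results are only a hint, not proof of what the crawler sees.

```python
import urllib.error
import urllib.request

# Illustrative User-Agent strings (assumptions; check each vendor's docs
# for the values their crawlers currently send).
USER_AGENTS = {
    "browser": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/124.0 Safari/537.36",
    "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; "
                 "+http://www.google.com/bot.html)",
    "python-default": "Python-urllib/3",
}


def fetch_status(url, user_agent, opener=urllib.request.urlopen, timeout=10):
    """Return the HTTP status code for a GET of `url` sent with `user_agent`."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with opener(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as exceptions; the code is what we want.
        return err.code


def compare_user_agents(url, agents=USER_AGENTS, opener=urllib.request.urlopen):
    """Map each agent label to the status code the server returned for it."""
    return {name: fetch_status(url, ua, opener) for name, ua in agents.items()}
```

Calling `compare_user_agents("https://callof.monster/sitemap.xml")` returns a dictionary of status codes keyed by label. If the browser-like header gets a 200 while the bot-like ones get a 403, that would be consistent with a rule that filters on the User-Agent.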

What I suspect:

- I strongly suspect that the Fastly WAF on the callof.monster property is not properly configured for these bots.

I opened a ticket with Ghost support a few days ago about this problem (no answer yet) so that they could take a quick look at the WAF logs and see which rule is blocking these requests. There is probably an allowlist that needs to include these non-malicious agents, or a rule that needs a bit of tweaking.

But I was wondering whether others here have observed similar behavior:

- In Google Search Console, can you fetch your sitemap.xml properly?

- If you ask one of these AI tools to check it, does it work for you?

One thing that might matter for my issue: when I first created my Ghost account and website a few months back, the site title (and therefore the ghost.io URL) was different; I changed it about 2-3 weeks ago. At the time the website was not yet open or ready, so I did not check in the search tools whether everything was OK. I wonder whether changing this property could somehow affect the WAF configuration.

One last thing I suspected was that the .monster TLD might not be handled properly by Google, but since other legitimate services also have difficulty crawling the site, I really suspect an issue with how the Fastly WAF property is configured by default.

So this is most likely something that can only be solved by Ghost staff who have access to my Fastly property, but I was wondering whether anyone has run into similar issues recently (I'm new to Ghost).

Setup information

Ghost Version

6.0.5-0-g524d7c21+moya - Hosted with a Ghost(Pro) subscription (Creator plan).